Using a Serverless Architecture to deliver IRC Webhook Notifications

Feb 28, 2016 · tags: aws, lambda, serverless, irchooky · 6 min read

In this blog post I would like to explore the concept of a Serverless Architecture and relate it back to a project that I have been working on – IRC Hooky.

If you’re not entirely familiar with some of these concepts, this isn’t a problem at all! I will do my best to explain these moving pieces and how they fit together.

Serverless what?

In general, the notion of serverless architecture refers to running application code on infrastructure that is fully managed by other people.

What this means is that your application, and by extension you, do not need to worry about the underlying infrastructure it is running on. In a model like this, the application for the most part just assumes that it has the underlying machine/network/infrastructure/scaling resources it needs and only concerns itself with higher-order logic.

In a traditional Amazon world with EC2 instances and autoscaling groups, the physical hardware component has been abstracted out into virtual components, but infrastructure and resources are very much still a thing that the people using them need to actively manage.

A serverless world promises to be this magical land where network/infrastructure/scaling will Just Work and the only thing that will be an application developer’s concern is the application itself.

The Bigger Picture

In April of last year, Amazon announced that Lambda was generally available. It didn’t seem like a big deal to me at the time as I couldn’t really think of interesting use-cases to experiment with.

I felt that Lambda (at that time) was not something that was relevant to most people. I recall it was marketed as event-driven functions for things such as:

- Automatically processing files uploaded to S3

- Acting on specific CloudWatch events

- etc.

This wasn’t very compelling to me. I recall thinking that this would be an interesting use case for a “serverless cron” sort of system, but even that lacked the scheduler aspect (keep in mind that Unreliable Town Clock was not yet a thing).

Amazon Announced API Gateway

Then in early July they announced another product called API Gateway. Among other things, it was now possible to trigger Lambda functions through API Gateway HTTP calls.

The concept of a REST-backend powered by these Lambda functions was intriguing to me!

- No machines to manage ✔

- Pay only for resources consumed ✔

- Boilerplate for HTTP (verb) endpoints ✔

I was smitten!

Definitions

Cool. So let’s explore what Lambda and API Gateway are all about.

AWS Lambda lets you run code without provisioning or managing servers. You pay only for the compute time you consume.

Lambda functions are designed to respond to one or more AWS events. They are time-bound to 5 minutes and you only pay for the resources you consume. Lambda currently supports writing functions in Java, Node.js, and Python. Having to only pay for the resources you consume is compelling because you’re incentivized to keep your functions lean in order to manage costs.

Amazon API Gateway is a fully managed service that makes it easy for developers to create, publish, maintain, monitor, and secure APIs at any scale.

The primary selling point for API Gateway is how easy it is to set up an endpoint. You are given the ability to implement caching and basic API throttling without much effort – this is a far cry from having to set up an nginx server and do this yourself.

Why does this matter?

In a traditional machine leasing model, one has to pay for the time in which a box is leased. This cost does not account for the fact that this box was idle for 60% of that time (for example). Committing to leasing a box means that you eat the cost of idle time.

The beauty of Lambda and API Gateway is that they make this hosting model somewhat obsolete.

What is IRC Hooky?

IRC Hooky is a framework for Lambda-driven IRC notifications. It currently supports webhook events from GitHub and HashiCorp Atlas.

At its core, IRC Hooky is a web server that listens for incoming HTTP requests and acts on them accordingly.

It was born out of my need to receive the same sort of GitHub-notification integration I was used to in Slack. This would have been immensely easier if deployed on Heroku or a traditional EC2 instance, but the ongoing cost of a passive service like this (yes, even the $7.00/month) was not worth it to me.

And seriously what fun would that have been?!

This felt like the perfect use-case for a Lambda/API Gateway driven backend!

How does it work?

It basically works as follows:

- Internet service X performs a POST request on the IRC Hooky endpoint

- API Gateway receives this request and triggers the IRC Hooky Lambda function

- IRC Hooky Lambda function validates the payload, asynchronously sends the IRC message, and returns a 200 (this 200 propagates back to the caller)

(More details available on the IRC Hooky overview page)
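
The three steps above can be sketched as a small Python Lambda function. This is only an illustration, not IRC Hooky's actual code: the names `handler`, `format_message`, and `send_to_irc` are hypothetical, the event is assumed to be the already-parsed JSON body of a GitHub pull request webhook, and the `statusCode` return shape is an assumption about how the API Gateway integration is mapped. For simplicity the sketch sends the message synchronously, whereas IRC Hooky sends it asynchronously.

```python
def format_message(payload):
    """Build the one-line IRC notification for a pull request event.

    Raises KeyError/TypeError if the payload lacks the expected fields.
    """
    pr = payload["pull_request"]
    return 'GitHub Pull Request "{}" {} by {} {}'.format(
        pr["title"], payload["action"], pr["user"]["login"], pr["html_url"]
    )


def send_to_irc(message):
    """Hypothetical stand-in for the IRC backend.

    A real backend would connect to an IRC server and deliver the message;
    this placeholder just prints it.
    """
    print(message)


def handler(event, context, send=send_to_irc):
    """Entry point that Lambda invokes when API Gateway receives a POST.

    `send` is injectable so the function can be exercised without IRC.
    """
    # Validate the payload by trying to format it
    try:
        message = format_message(event)
    except (KeyError, TypeError):
        return {"statusCode": 400, "body": "unsupported payload"}

    # Deliver the notification, then report success back to the caller
    send(message)
    return {"statusCode": 200, "body": "ok"}
```

Keeping the formatting and the sending in separate functions means a new webhook source or a new backend only has to replace one of them.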

After IRC Hooky receives and processes the event, the resulting message will look something like this in your IRC client:

15:52:43 < irchooky> GitHub Pull Request "Quiet down the Travis build logs" opened by marvinpinto https://github.com/marvinpinto/kitchensink/pull/15

How much does running this cost?

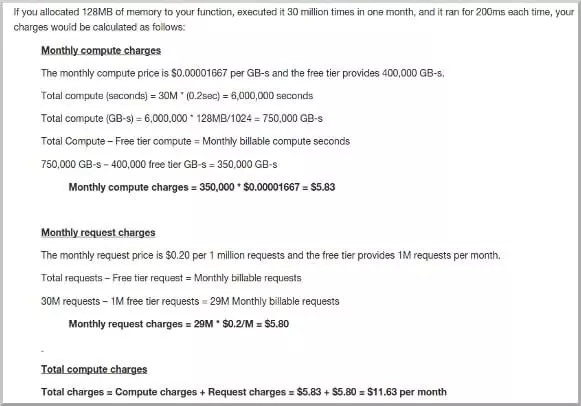

The economics of running IRC Hooky (or other Lambda functions) at scale is what is most appealing about this architecture. To give you an idea of what this means, here is a screenshot from the Lambda pricing example page.

128MB Lambda functions are allowed to consume ~889 hours per month (37 days) without charge, along with the first million requests free. API Gateway, on the other hand, charges $3.50 per million API calls, along with a free tier of a million API calls per month (for the first 12 months).
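
To see where the ~889 hours come from, here is a rough calculation. It assumes the Lambda free tier is 400,000 GB-seconds of compute per month, which is the allowance that figure implies; the function name `free_hours` is just for illustration.

```python
# Assumed Lambda free-tier compute allowance, in GB-seconds per month
FREE_GB_SECONDS = 400000


def free_hours(memory_mb):
    """Hours of free execution per month for a function of the given size."""
    memory_gb = memory_mb / 1024
    free_seconds = FREE_GB_SECONDS / memory_gb
    return free_seconds / 3600


hours = free_hours(128)
print("{:.0f} hours, or about {:.0f} days".format(hours, hours / 24))
```

Because the allowance is measured in GB-seconds, doubling the memory size halves the free hours.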

What does all this mean?

For most folks, IRC Hooky will happily survive on AWS’ Lambda free-tier. The component here that will cost more than $0.00 per month is API Gateway ($0.0000035/request).

Estimating an exaggerated 100K API calls per month will ding you ~$0.35 (per month) with API Gateway.
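
The arithmetic behind that estimate is a one-liner. Here it is as a small sketch using the $3.50 per million calls quoted above; `api_gateway_cost` and its `free_requests` parameter are hypothetical names for illustration.

```python
# API Gateway price quoted above, in dollars per million API calls
PRICE_PER_MILLION = 3.50


def api_gateway_cost(requests, free_requests=0):
    """Monthly API Gateway cost in dollars for a given number of calls."""
    billable = max(0, requests - free_requests)
    return billable * PRICE_PER_MILLION / 1000000


# 100K calls per month with no free tier left
print("${:.2f}".format(api_gateway_cost(100000)))
```

While the 12-month free tier is still active, passing `free_requests=1000000` brings the same 100K calls down to zero.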

Pretty neat, huh!

Get Involved!

So! If IRC Hooky sounds cool to you, I invite you to contribute in any way you would like!

- Questions? IRC and/or email work best for me!

- Bugs? Send ‘em over

- New backend? Schweet!

- Code/Documentation patches? Shipit

Parting Thoughts

I think that Lambda functions and other forms of serverless architecture will have their place in our infrastructure. They will be another tool we’ll use to abstract out logic and reduce costs (complexity or otherwise).

We have a long way to go before this becomes mainstream but given the innovation thus far by Amazon and other companies in this space (like Google), I’m excited about the possibilities!

The banner for this post was originally created by Aaron Burden and licensed under CC0 (via unsplash)